Why Trust Science?

Revising facts in light of new evidence isn't a weakness. It's a superpower.

Posted October 9, 2021 | Reviewed by Vanessa Lancaster

Key points

- People tend to believe most strongly in things that are, in reality, the most uncertain.

- Science is a method of making repeated observations while weeding out subjective biases to arrive at objective truths.

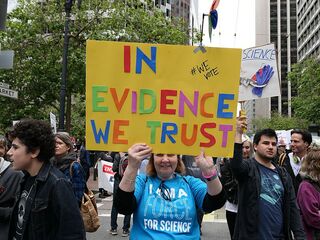

- Trust in science doesn't come naturally and must be earned.

In an era where personal truth is often valued above facts, it has been claimed that science is in crisis. I was recently interviewed about this by freelance journalist Cathy Casseta.

Can you explain what it means for science to be self-correcting?

Strictly speaking, science refers to an approach to determine objective truths using the scientific method that involves making observations and testing hypotheses about those observations through controlled experiments. Scientific facts are never immutable—they’re probabilistic explanations that are only as good as the replicable observations upon which they’re based. Research means to re-search—to look again and repeat our observations, controlling for other potential explanations. When we have new observations that differ from previous ones, we have to resolve those differences and change our explanations. That’s the iterative and self-correcting feature of science.

Why do you think the general public might interpret science self-correcting as science being untrustworthy?

The use of data gathered in carefully controlled experiments to inform knowledge isn’t an intuitive way of doing things. Although we all have some cursory science education in school, it’s taught as a kind of separate subject rather than one that should be integrated with knowledge-seeking more generally. And no one, not even scientists, goes about daily life applying the scientific method or performing controlled experiments to inform their beliefs.

On the contrary, people often try to make the data fit their beliefs, rather than the other way around. More often than not, beliefs aren’t formed based on objective data but on subjective experience and the testimony of others. And people tend to have the most faith in, and feel most passionately about, things that are, in reality, the most uncertain.

Human beings also do better with absolutes and categorical distinctions than with a reality that involves uncertainty, ambiguity, and grey areas. Our actions often depend on a kind of unwarranted confidence about that reality that can sometimes lead us astray. So, when scientific explanations and conclusions shift in light of new data, the public often takes this to mean that because “science was wrong,” it’s completely unreliable or untrustworthy. But the flexibility to change in light of new data—the self-correcting feature—is actually science’s superpower.

What do you say to those who believe science is “in crisis” or “broken”?

This is where we get away from the true meaning of “science” as a process of acquiring knowledge. The surgeon and writer Atul Gawande noted, “few dismiss the authority of science… they dismiss the authority of the scientific community.” In keeping with this distinction, there’s nothing particularly broken about science as a process for getting at truth, but when we start talking about human beings and science as a social institution, we have to acknowledge that things like bias, conflicts of interest, and politics can and sometimes do get in the way of good science. That’s a reality of all human activity to which science is not immune.

There’s a saying in scientific research that “the conclusions giveth, but the methods taketh away,” which echoes what I said above: scientific explanations are only as good as the data they’re based on and how well an experiment was designed and carried out. The replicability crisis in psychology demonstrates the perils of making sweeping generalizations based on a single study.

There’s also good science and junk science out there. Unfortunately, it can be difficult for people, especially those without scientific expertise themselves, to tell them apart. That’s the job of peer review as a quality control for published research, but it’s hardly an infallible process, so bad science does slip through the cracks.

But with those concessions made, we should also be aware that claiming that science is in crisis is a propaganda tactic designed to undermine a legitimate institution of authoritative knowledge. If science is broken or in crisis, as some claim, then we don’t have to listen to scientists. We can then discount what they say about inconvenient truths like COVID-19 or climate change. But when people make such claims, they’re often arguing that people should listen to them instead—they’re saying, “don’t trust the scientists, listen to what I say and do what I tell you to do.”

Mistrust in authoritative sources of knowledge leaves us vulnerable to misinformation. That’s how we get to a place where people believe falsehoods such as that COVID-19 was caused by 5G networks, that vaccine campaigns are about microchipping people, or that the Earth is flat. To me, that’s a much bigger crisis than anything related to science.

How can scientists and journalists bolster trust in science?

In the US, overall trust, whether we’re talking about government, the media, or our neighbors, has recently been at its lowest point going back some 70 years. In contrast, trust in the institution of science has actually been quite good, holding steady and standing above other institutions of authority through the years. But that’s not to say there isn’t pushback, and some of it is coming disproportionately from those who identify as political conservatives. We’re also seeing the rise of populist movements across the world that, at their heart, represent a kind of revolt against institutions of authority and so-called “elites.”

To respond to that, we have to understand that bolstering trust is about earning trust: we can’t place the cart of trust before the horse of trustworthiness. That means that the institution of science has to do a better job of being transparent, minimizing conflicts of interest, and providing the public with a seat at the table where scientists are available for open discussion. It has to be less ivory tower and more grassroots, with science being encouraged among young people and within communities. And yes, we have to do a better job of communicating that the iterative process of scientific discovery isn’t a weakness, but a strength that sets it apart from dogma. We have to show people why it’s worth trusting the process that is science.

In terms of the media in today’s digital age, there are so many sources of information and a conflation of objective reporting and subjective opinion that people can always dismiss one informational source for another that matches what they want to believe. I call this “confirmation bias on steroids.” In that sense, the media has a tougher task ahead of it than science does in terms of regaining trust. It has to find a way back to objective reporting, bearing in mind that whatever a journalist writes, someone else is probably somewhere else writing the opposite.

As a society, we need to do a better job of teaching the public to become better consumers of disparate sources of information. As with science, the solution probably involves an educational reboot from the ground up: teaching people, at an early age, the skills to sift through bias and find objective truths.

Portions of this interview were included in an article at Verywell Mind.